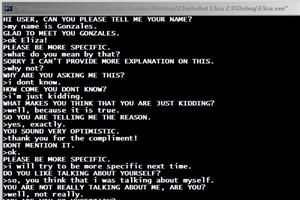

Eliza chatbot history
12/29/2023

It didn’t take long for Microsoft’s new AI-infused search engine chatbot - codenamed “Sydney” - to display a growing list of discomforting behaviors after it was introduced early in February, with weird outbursts ranging from unrequited declarations of love to painting some users as “enemies.”

As human-like as some of those exchanges appeared, they probably weren’t the early stirrings of a conscious machine rattling its cage. Instead, Sydney’s outbursts reflect its programming, absorbing huge quantities of digitized language and parroting back what its users ask for. Which is to say, it reflects our online selves back to us. And that shouldn’t have been surprising - chatbots’ habit of mirroring us back to ourselves goes back way further than Sydney’s rumination on whether there is a meaning to being a Bing search engine. In fact, it’s been there since the introduction of the first notable chatbot nearly 60 years ago.

In 1966, MIT computer scientist Joseph Weizenbaum released ELIZA (named after the fictional Eliza Doolittle from George Bernard Shaw’s 1913 play Pygmalion), the first program that allowed some kind of plausible conversation between humans and machines. The process was simple: modeled after the Rogerian style of psychotherapy, ELIZA would rephrase whatever input it was given in the form of a question. If you told it a conversation with your friend left you angry, it might ask, “Why do you feel angry?”

Ironically, though Weizenbaum had designed ELIZA to demonstrate how superficial the state of human-to-machine conversation was, it had the opposite effect. People were entranced, engaging in long, deep, and private conversations with a program that was only capable of reflecting users’ words back to them. Weizenbaum was so disturbed by the public response that he spent the rest of his life warning against the perils of letting computers - and, by extension, the field of AI he helped launch - play too large a role in society.

ELIZA built its responses around a single keyword from users, making for a pretty small mirror. Today’s chatbots reflect our tendencies drawn from billions of words. Bing might be the largest mirror humankind has ever constructed, and we’re on the cusp of installing such generative AI technology everywhere.

But we still haven’t really addressed Weizenbaum’s concerns, which grow more relevant with each new release.

ELIZA showed us just enough of ourselves to be cathartic

Weizenbaum did not believe that any machine could ever actually mimic - let alone understand - human conversation. “There are aspects to human life that a computer cannot understand - cannot,” Weizenbaum told the New York Times in 1977. “Love and loneliness have to do with the deepest consequences of our biological constitution. That kind of understanding is in principle impossible for the computer.”

That’s why the idea of modeling ELIZA after a Rogerian psychotherapist was so appealing: the program could simply carry on a conversation by asking questions that didn’t require a deep pool of contextual knowledge, or a familiarity with love and loneliness. Named after the American psychologist Carl Rogers, Rogerian (or “person-centered”) psychotherapy was built around listening and restating what a client says, rather than offering interpretations or advice. “Maybe if I thought about it 10 minutes longer,” Weizenbaum wrote in 1984, “I would have come up with a bartender.”

To communicate with ELIZA, people would type into an electric typewriter that wired their text to the program, which was hosted on an MIT system. ELIZA would scan what it received for keywords that it could flip back around into a question. For example, if your text contained the word “mother,” ELIZA might respond, “How do you feel about your mother?” If it found no keywords, it would default to a simple prompt, like “tell me more,” until it received a keyword that it could build a question around.

Weizenbaum intended ELIZA to show how shallow computerized understanding of human language was. If a simple academic program from the ’60s could affect people so strongly, how will our escalating relationship with artificial intelligences operated for profit change us? There’s great money to be made in engineering AI that does more than just respond to our questions: AI that plays an active role in bending our behaviors toward greater predictability. The risk, as Weizenbaum saw, is that without wisdom and deliberation, we might lose ourselves in our own distorted reflection.
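ELIZA’s keyword scanning, its flipping of the user’s words back into a question, and its fallback prompt are simple enough to sketch in a few lines of Python. This is a minimal, hypothetical illustration, not a reconstruction of Weizenbaum’s program: the rule table, the `REFLECTIONS` pronoun map, and the function names `reflect` and `respond` are all assumptions made for the example.

```python
import re

# Pronoun swaps used to "flip" a fragment of the user's text back at them.
# This small table is an illustrative assumption, not ELIZA's original script.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

# Hypothetical keyword rules, checked in order. Each pairs a regex with a
# question template; "{0}" is filled with the reflected captured fragment.
RULES = [
    (re.compile(r"\bmother\b", re.IGNORECASE), "How do you feel about your mother?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bleft me (\w+)", re.IGNORECASE), "Why do you feel {0}?"),
]

# Fallback used when no keyword matches.
DEFAULT_PROMPT = "Tell me more."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words, e.g. 'my job' -> 'your job'."""
    words = fragment.lower().rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(text: str) -> str:
    """Turn the first matching keyword into a question, else use the fallback."""
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Reflect any captured fragment before inserting it into the template.
            return template.format(*(reflect(group) for group in match.groups()))
    return DEFAULT_PROMPT
```

With these rules, `respond("I feel sad about my job.")` returns "Why do you feel sad about your job?", and an input with no keyword, such as "The weather was nice today.", falls back to "Tell me more." In this sketch the first matching rule wins, a simplifying assumption that keeps the mirror as small as the one described above.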
